Why Binary Descriptors?

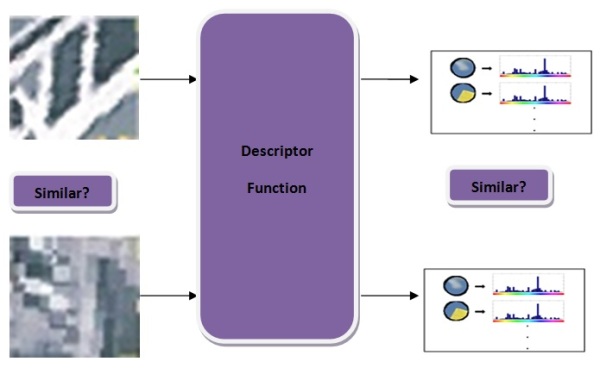

Following the previous post on descriptors, we’re now familiar with histogram of gradients (HOG) based patch descriptors. SIFT[1], SURF[2] and GLOH[3] have been around for years (SIFT since 1999) and have been used successfully in various applications, including image alignment, 3D reconstruction and object recognition. On the practical side, OpenCV includes implementations of SIFT and SURF, and Matlab packages are also available (check VLFeat for SIFT and extractFeatures in Matlab's Computer Vision Toolbox for SURF).

So, if there is no question about the performance of SIFT and SURF, why not use them in every application?